Software Must Be Secure by Design, and Artificial Intelligence Is No Exception

Discussions of artificial intelligence (AI) often swirl with mysticism regarding how an AI system functions. The reality is far simpler: AI is a type of software system.

And like any software system, AI must be Secure by Design. This means that manufacturers of AI systems must treat the security of their customers as a core business requirement, not just a technical feature, and prioritize security throughout the whole lifecycle of the product, from inception of the idea to planning for the system’s end-of-life. It also means that AI systems must be secure to use out of the box, with little to no configuration changes or additional cost.

AI is powerful software

The specific ways to make AI systems Secure by Design can differ from those for other types of software, and some best practices for safety and security are still being defined. Additionally, the ways in which adversaries may choose to use (or misuse) AI software systems will undoubtedly continue to evolve – issues that we will explore in a future blog post. However, fundamental security practices still apply to AI software.

AI is software that does fancy data processing. It generates predictions, recommendations, or decisions based on statistical reasoning (strictly speaking, this is true of machine learning types of AI). Evidence-based statistical reasoning, whether in policy making or elsewhere, is a powerful tool for improving human lives. Evidence-based medicine understands this well. If AI software automates aspects of the human process of science, that makes it very powerful, but it remains software all the same.

Software should be built with security in mind

CEOs, policymakers, and academics are grappling with how to design safe and fair AI systems, and how to establish guardrails for the most powerful AI systems. Whatever the outcome of these conversations, AI software must be Secure by Design.

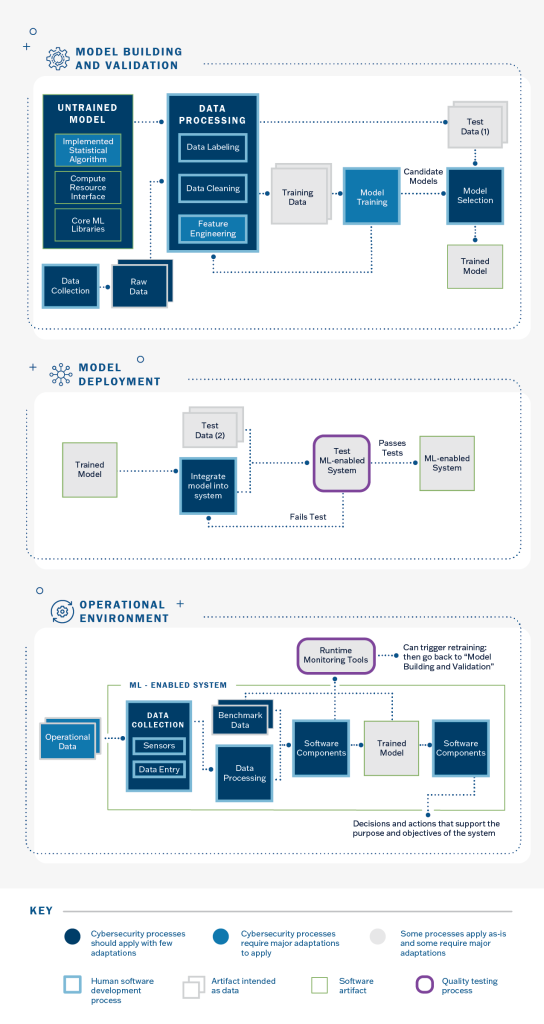

AI software design, AI software development, AI data management, AI software deployment, AI system integration, AI software testing, AI vulnerability management, AI incident management, AI product security, and AI end-of-life management – for example – should all apply the existing, community-expected security practices and policies for broader software design, software development, and so on. Where AI engineering has avoided applying these practices, it continues to take on too much technical debt. As the pressure to adopt AI software systems increases, developers will be pressured to take on technical debt rather than implement Secure by Design principles. Since AI is the “high interest credit card” of technical debt, it is particularly dangerous to choose shortcuts rather than Secure by Design.

Some aspects of AI, such as data management, differ operationally in important ways from expected practices for other software types, and some security practices will need to be augmented to account for AI considerations. Even so, the AI engineering community should start by applying existing security best practices. Secure by Design practices are a foundation on which other guardrails and safety principles depend. Therefore, the AI engineering community should be encouraged to integrate or apply these Secure-by-Design practices starting today.

AI community risk management

Secure by Design “means that technology products are built in a way that reasonably protects against malicious cyber actors successfully gaining access to devices, data, and connected infrastructure.” Secure by Design software is designed securely from inception to end-of-life. System development life cycle risk management and defense in depth certainly apply to AI software. The larger discussions about AI often lose sight of the workaday shortcomings in AI engineering as related to cybersecurity operations and existing cybersecurity policy. For example, systems processing AI model file formats should protect against untrusted code execution attempts and should use memory-safe languages. The AI engineering community must institute vulnerability identifiers like Common Vulnerabilities and Exposures (CVE) IDs. Since AI is software, AI models – and their dependencies, including data – should be captured in software bills of materials. The AI system should also respect fundamental privacy principles by default.
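To make the point about model file formats concrete, here is a minimal sketch in Python. It assumes a model saved with Python's `pickle` format, which can execute arbitrary code when loaded. The allowlist contents, the `RestrictedUnpickler` class, and the `safe_loads` helper are hypothetical names chosen for illustration; the approach follows the "restricting globals" pattern described in the Python `pickle` documentation. It illustrates one mitigation, not a complete defense.

```python
import io
import pickle
from collections import OrderedDict

# Hypothetical allowlist: the only (module, name) pairs the loader may resolve.
# A real model format would need its own carefully reviewed list.
ALLOWED_GLOBALS = {
    ("collections", "OrderedDict"),
}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that refuses to import anything outside the allowlist."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        # Anything else (os.system, eval, ...) is rejected before it is imported.
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def safe_loads(data: bytes):
    """Deserialize untrusted bytes using the restricted unpickler."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


# Usage: a benign "weights" object round-trips without trouble.
weights = OrderedDict(layer1=[0.1, 0.2])
restored = safe_loads(pickle.dumps(weights))
```

A payload that tries to reference a callable outside the allowlist raises `pickle.UnpicklingError` instead of running. Where possible, a data-only serialization format that cannot express code at all is the more robust choice.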

CISA understands that even once these standard engineering, Secure-by-Design, and security operations practices are integrated into AI engineering, AI-specific assurance issues will remain. For example, adversarial inputs that force misclassification can cause cars to misbehave on road courses or hide objects from security camera software. These adversarial inputs are practically different from standard input validation or security detection bypass, even if they’re conceptually similar. The security community maintains a taxonomy of common weaknesses and their mitigations – for example, improper input validation is CWE-20. Security detection bypass through evasion is likewise a familiar problem for network defenses; intrusion detection system (IDS) evasion is one example.
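The practical difference can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: the `validate_image` helper, the 4×4 grayscale image represented as a flat list of integers, and the size of the perturbation. It shows only that classic input validation checks structure and range, so a slightly perturbed input passes exactly as the clean one does.

```python
def validate_image(pixels, width, height):
    """Classic input validation (the CWE-20 kind): type, size, and range checks."""
    if not isinstance(pixels, list) or len(pixels) != width * height:
        raise ValueError("unexpected image size")
    for p in pixels:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(p, bool) or not isinstance(p, int) or not 0 <= p <= 255:
            raise ValueError("pixel value out of range")
    return pixels


# A hypothetical 4x4 grayscale image and a slightly perturbed copy of it.
clean = [120] * 16
perturbed = [min(255, p + 2) for p in clean]

# Both are well-formed, so both pass validation.
validate_image(clean, 4, 4)
validate_image(perturbed, 4, 4)
```

Whether the perturbed copy would actually change a model's output depends on the model, which is why this class of issue needs AI-specific assurance work on top of, not instead of, ordinary validation.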

AI-specific assurance issues are primarily important if the AI-enabled software system is otherwise secure. Adversaries already have well-established practices to exploit an AI system with exposed known-exploited vulnerabilities in the non-AI software elements. With the example of adversarial inputs that force misclassifications above, the attacker’s goal is to change the model’s outputs. Compromising the underlying system also achieves this goal. Protecting machine learning models is important, but it is also important that the traditional parts of the system are isolated and secured. Privacy and data exposure concerns are more difficult to assess – given model inversion and data extraction attacks, a risk-neutral security policy would restrict access to any model at the same level as one would restrict access to the training data.

Goal - AI system assurance

AI is a critical type of software, and attention on AI system assurance is well-placed. Although AI is just one among many types of software systems, AI software has come to automate processes crucial to our society, from email spam filtering to credit scores, from internet information retrieval to helping doctors find broken bones in X-ray images. As AI grows more integrated into these software systems and the software systems automate these and other aspects of our lives, the importance of AI software that is Secure by Design grows as well. This is why CISA will continue to urge technology providers to ensure AI systems are Secure by Design – every model, every system, every time.